October 31, 2007

Halloween Costumes

My wife and I went to a costume party last weekend, and we went as PC and Mac from the Apple commercials. This matches our real computer usage at home. It's a pretty easy costume: she wore a t-shirt (couldn't find an unzipped sweatshirt to wear, which would have added to the authenticity) and an iPod on a lanyard around her neck. I wore a suit and also various Windows-logoed tchotchkes that I picked up at the company store (pen, zip cardkey holder, "Geek" button, etc). She also bought me a pocket protector. To clarify what we were aiming for, we both wore those "Hello my name is" tags with "PC" and "Mac" on them. It worked really well and is a pretty cheap costume if you already own a suit and an iPod.

Which reminds me, if you search on "willy wonka cane" you still, to this day, come back with my description of how to make one as the first algorithmic result. Actually the second hit is pretty good also. Here's somebody selling one for $430, which is about $415 more than I spent on mine. "99% of the canes out there, are simple thin walled plastic tubes, with no taper to them. They have smooth surfaces, and are usually filled with Nerds. And they're usually topped off with a strange array of items, not limited to door stoppers, or even chair legs." Guilty!

Posted by AdamBa at 03:10 PM | Comments (1) | TrackBack

October 28, 2007

Sox Win!

I realized that I've been blogging since before the last time the Red Sox won the World Series. But I checked the archive for October 2004 and I didn't mention them winning. What I did talk about includes: debunking a Microsoft urban legend about people sending email from unlocked computers they discover; the imminent availability of Find the Bug; a contest at Bookpool related to the book; Howard Stern announcing his move to Sirius; bad interview questions; the Storm winning the WNBA championship; my dubiousness about personal podcasts (which I still have); my first link to Mini-Microsoft; Google opening their Kirkland office; a trip to the Legoland Master Builder session; the Channel 9 Guy carved in a pumpkin; and various election musings. I guess it was a fairly eventful month.

My brother was reading a book called The Mind of Bill James. The book doesn't really talk much about James's experience with the Red Sox (I gather James doesn't discuss that publicly) but it does have a description from his wife, Susie, of the scene after the Red Sox won in 2004:

"Out of the crush of people, Theo Epstein emerged. he gave us a big bear hug. He was totally soaked with champagne and beer. My stellar memory from the World Series came moments later. I hadn't met John Henry before. Bill introduced us after they had exchanged congratulations. John poked his index finger repeatedly into Bill's chest and said, 'You're a World Champion.'

John was pulled away, and Bill and I walked around the field, meeting and talking with people. At one point, Curt Schilling and Pedro Martinez came dashing across the field, trophy in Pedro's hand. My favorite player, David Ortiz, was being interviewed feet away from us. Bill and I noticed people scooping up some of the dirt from the base paths, so we followed suit, stuffing a few handfuls into my jacket pocket."

I assume Bill James was there again tonight. My congratulations to the Red Sox. One of my sons is a huge baseball fan but is only 8, so he missed the glory days of the Mariners. Luckily he's also a big Red Sox fan so he has gotten to enjoy their recent success.

Posted by AdamBa at 08:31 PM | Comments (0) | TrackBack

October 27, 2007

Pie Underground

"Hmm", you may be thinking to yourself, "Hasn't it been a couple of years since the Seattle Post-Intelligencer proposed a wacky solution for replacing the 520 bridge?" And you would be right! Luckily the rode to the rescue a couple of days ago. This time they were flogging variant 3, subsection D, which is "a tunnel of some sort". I think it's best if we just roll the image first:

So what you've got here is a tunnel which drops down from I-5 to below the water level, and also includes an underwater highway interchange, and then magically surfaces in the middle of Union Bay to connect to a bridge. This was designed by Danish people, who brought us IKEA and Volvos, or something, so it wouldn't be one of those clunky islands that dopey American bridge builders toss together whenever they build a bridge-tunnel (the connector islands for that one are about 200 yards wide, which is roughly half the width of the opening from Union Bay to Lake Washington). Instead it would just slip into the water, sort of like the Incredible Hulk roller-coaster at Universal Orlando.

There's a very interesting but subtle part of the article, which was explained to me by my brother, who works for the New York City Department of Transportation. This quote "Tunnels were not among the options featured in a draft environmental impact statement released last fall by the state Department of Transportation" is followed later by this lukewarm quote from the WSDOT project manager: "We're interested in what COWI comes up with." Why is he so dubious? Well, their draft EIS did not include the tunnel. But if they have to consider the tunnel, they have to re-do the draft EIS. So just adding this to the alternatives they have to consider, no matter how quickly it gets tossed aside as absurd, is going to set the project back a year or two (this on top of the mandatory 18-month delay due to the mediation process that was imposed by the Legislature). I hate this stuff because it reinforces this weird belief that government is slow and inefficient. But really, most of the time government is slow and inefficient because it has to take the time to listen to clueless citizens with ideas like this tunnel. Then people wonder why it takes 15 years to build a bridge...oh right, must be that slow and inefficient government.

The P-I, inspired by all the "you are idiots" comments posted to the original article, responded with a "We are not idiots, really" editorial to back up the article. Let's see..."It might not be feasible. It might be too expensive. Then again, it might be neither of those things." It's hard to argue with that. You could say the same thing about my plan to build giant catapults on either side of the lake. "It would also be an interesting departure from the contentious four-lane/six-lane bridge debate that has brought us to the point of requiring outside mediation." I never thought of that! Because a contentious four-lane/six-lane TUNNEL debate would be much more constructive--especially since you can't build the tunnel equivalent of wider pontoons to accommodate future expansion, as you can with a bridge. "What's the harm in waiting for the Danish engineering firm to do its work and then decide whether a freak-out is warranted?" I don't know. What's the harm in waiting for aliens to land and build the bridge for us? And I won't even get to the part of the editorial where they relate Danish fiscal policy to design quality, mostly because I don't understand it.

Gahh, I know the Danes built the Great Belt and invented Lego, but does it really matter that a Danish company is investigating it? It's a hopeless idea, can we please just punt it and get on with building the six-lane bridge, which is the obvious answer, isn't really that complicated, would be routed through the same spot as the current bridge with only a small widening of the right-of-way, and is what we are eventually going to build once all this nonsense plays out? Thank you.

Posted by AdamBa at 05:26 PM | Comments (1) | TrackBack

October 24, 2007

Interviewing Doctors vs. Interviewing Programmers

A while ago I blogged about a NYer article by Jerome Groopman discussing how doctors diagnose patients and comparing that to how developers diagnose bugs. The article was actually an excerpt from Groopman's book How Doctors Think.

This got me wondering about the related question of how doctors interview other doctors who are looking for a job. Since the work doctors do has some similarities to what programmers do, perhaps the interview process also has some similarities. So whenever I've seen a doctor recently, either on a medical visit or socially, I've asked them how they would interview a new doctor. In particular, would they ask questions that were the equivalent of what Microsoft interviewers tend to ask programmers: "Can you tell me what an embolism is", "Let's roleplay where I'm a patient with a mysterious ailment and you diagnose me", "Can you do a suture in this piece of bok choy", etc.

The unanimous answer, which I was mostly expecting, was a resounding no. When doctors interview other doctors they ask questions to determine how the doctor would fit in and what is their general philosophy about medicine. They also spend a lot of time following up on their references, and it's likely that after they are hired they will watch them for a while--for example observe the first few babies they deliver. But they don't ask about their specific medical knowledge.

The reason is that after a doctor has gone to medical school, done their residency, and passed their boards, you can assume that they know the basics of medicine, and know how and when to research the parts they don't know. And of course this points out one of the big gaps in software engineering, which is that we don't have any kind of certification process people go through before they can call themselves a software engineer. So, we are reduced to asking questions that suss out whether someone has the basic ability to do the job.

But at the same time, I think we also make it harder for ourselves than it needs to be. We tend not to check references, and we tend to discount prior work experience, and there's really no reason to do that. We could even watch people do hands-on work before hiring them. We may not have standard certification we can rely on, but there's no reason to base the entire decision on a few hours of interviewing.

Posted by AdamBa at 10:02 PM | Comments (7) | TrackBack

October 20, 2007

Last Tweak of the RPN calculator

We had the first simple version and a more data-driven one. Now I figured out how to get a static class property, so I can make that part of the calculator generic. I still have to specify whether it is on [Math] or [Decimal], so add a couple new hashtables at the top:

$dfs = @{
    "max" = "MaxValue" ;
    "min" = "MinValue"
}

$mfs = @{
    "e" = "E" ;
    "pi" = "PI"
}

and then the code to invoke them, which replaces the hardcoded switch cases for "e", "pi", "min", and "max":

{ $dfs.$a } {
    $s.Push(
        [Decimal].GetMember($dfs.$a)[0].GetValue($null))
}

{ $mfs.$a } {
    $s.Push(
        [Convert]::ToDecimal(
            [Math].GetMember($mfs.$a)[0].GetValue($null)))
}

What's declared in the hashtables above is probably all the static properties you need; [Math] doesn't have any others, and if you look at the ones from [Decimal] (which you can see with "[Decimal] | get-member -static") it only has MinusOne, One, and Zero, which we don't need given how our calculator works (we can just use -1, 1, and 0 and have the regular [Decimal]::TryParse() pick them up).
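One wrinkle: MaxValue, MinValue, E and PI are, as far as I can tell, static fields rather than properties in the strict .NET sense, which is why what GetMember() hands back has a one-argument GetValue(). A minimal sketch of the same lookup going through GetField() directly; the helper name Get-StaticField is made up for illustration and is not part of the calculator:

```powershell
# Hypothetical helper (not from the calculator script): read a public
# static field by name via reflection.
function Get-StaticField([Type]$type, [string]$name) {
    $field = $type.GetField($name)
    if ($field -eq $null) { throw "No public field '$name' on $type" }
    # Static fields take $null as the instance argument.
    $field.GetValue($null)
}

$max = Get-StaticField ([Decimal]) "MaxValue"
$pi  = Get-StaticField ([Math]) "PI"
```

The parentheses around ([Decimal]) and ([Math]) matter; without them PowerShell treats the type name as a plain string argument.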

OK, no more about this, at least for a while, I promise.

Posted by AdamBa at 08:39 AM | Comments (0) | TrackBack

October 18, 2007

Random Slashdot Stuff for Auction on eBay

In honor of its 10th anniversary, Slashdot is having a charity auction of a few items on eBay, proceeds to benefit the EFF. The current bids:

- $1225.00 (25 bids) for your own @slashdot.org email address.

- $257.52 (17 bids) for the case (plus some internals) from the first server to host slashdot.org.

- $1076.00 (23 bids) for having your URL plugged in the auction follow-up story on the site.

- $51.00 (10 bids) for a fire-damaged copy of "Watchmen" that belonged to a Slashdot founder.

- $676.00 (19 bids) for a random collection of Slashdot merch (t-shirts, hats, etc).

- $2000.00 (34 bids) for a very low Slashdot UID (which would represent to others that you had signed up for Slashdot in the very early days of the site).

One of the things that has always fascinated me about celebrity is the way it can be used to create value from nothing. For example, if you are a famous baseball player and you sign a blank piece of paper, it suddenly has value--from out of thin air. If you could get $50 for an autograph and signed 100 autographs a day, you could earn almost $2 million a year just from that (I used my nifty RPN calculator for that one!). Of course you would flood the market for your autograph etc. but still it's impressive.

The Slashdot charity auction shows a bit of this. The server case, certainly, is pure celebrity effect; the thing belongs in a scrap heap, and it's hard to imagine what the winning bidder would do with it besides exhibiting in their house and saying "d00d" a lot (until their wife/girlfriend intervenes and redirects it back on the path to the scrap heap). In about 1991 I was in a snowboard shop in Whistler and they were selling a used board from a famous snowboarder (the name "Sean Kearns" leaps unbidden to my mind, but that might be wrong). It was selling for $800, much more than the new boards were selling for, but it had a sign on it saying "This snowboard is a piece of junk, it's cracked and delaminated, but you'll pay for it because it's Sean Kearns's [or whoever's] old board!" And it probably did sell.

Some of the others are reasonable; the Slashdot swag you could at least wear, if not derive $676 of use from; a plug on slashdot is worth something; and "Watchmen" is a great story. The @slashdot.org email address could show you are a cool frood and maybe get you a job or a date. I do wonder about the low UID. It gives you no extra functionality from just signing up tomorrow and getting a high UID, but in the world of Slashdot it does connote status. So again, it's value that was created from nothing due to the success of the site.

Posted by AdamBa at 01:25 PM | Comments (1) | TrackBack

October 17, 2007

Hacking on the PowerShell RPN Calculator

I couldn't resist "improving" the calculator by making it more generic. I put quotes around "improving" because it is possible to overdo something like this. Right now the code is a bit harder to read than the earlier version but it also is much more extensible, so I think it's OK. It has four hashtables mapping operators to .NET functions; there are four because it distinguishes between functions on the Math class and functions on the Decimal class, and between methods that take one parameter and ones that take two. I could make that data-driven also if I tried, but that might be overkill. In fact I could make it generically take any unknown token it finds and try to invoke a matching method on the Math or Decimal classes...

I also haven't quite figured out how to get the static properties programmatically, so I had to hardcode in e, pi, max and min. This may be due to bugs in PowerShell 1.0; I know there is a bug where you should be able to write:

$add = [Decimal]::Add

$add.Invoke(2,3)

but it doesn't work. That's not exactly the same as getting the value of a static property, but maybe there's a related issue, or maybe I just can't figure out how to do it right.
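As for the idea of taking any unknown token and trying it against [Math], it might look something like the sketch below. This is only a sketch under some assumptions: Find-MathFunction is a made-up name, it only considers static methods that take a single Double, and it leans on the IgnoreCase binding flag so that "sin" finds Sin.

```powershell
# Hypothetical helper: resolve a token to a one-argument static method
# on [Math], ignoring case. Returns $null when nothing matches.
function Find-MathFunction([string]$token) {
    $flags = [Reflection.BindingFlags]"Public,Static,IgnoreCase"
    [Math].GetMethod($token, $flags, $null, [Type[]]@([Double]), $null)
}

$m = Find-MathFunction "sin"
$name = $m.Name

# Static methods are invoked with $null as the target object.
$value = $m.Invoke($null, @([Double]0))

# A token with no matching method just comes back empty.
$missing = Find-MathFunction "frobnicate"
```

In the calculator this would sit after the hashtable cases, so the explicit operator mappings still win and only leftover tokens fall through to the reflection lookup.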

Anyway, this is the code right now:

$s = new-object System.Collections.Stack
$n = new-object System.Decimal

$df1 = @{
    "neg" = "Negate"
    "floor" = "Floor"
    "ceil" = "Ceiling"
    "round" = "Round"
}

$df2 = @{
    "+" = "Add" ;
    "-" = "Subtract" ;
    "*" = "Multiply" ;
    "/" = "Divide" ;
    "mod" = "Remainder"
}

$mf1 = @{
    "cos" = "Cos" ;
    "sqrt" = "Sqrt" ;
    "exp" = "Exp"
}

$mf2 = @{
    "^" = "Pow" ;
    "log" = "Log"
}

foreach ($a in $args) {
    switch ($a) {
        { $df1.$a } {
            $s.Push(
                [Decimal].GetMethod($df1.$a,@([Decimal])).Invoke(
                $null,
                @($s.Pop())))
        }
        { $df2.$a } {
            $temp = $s.Pop()
            $s.Push(
                [Decimal].GetMethod($df2.$a,@([Decimal],[Decimal])).Invoke(
                $null,
                @($s.Pop(),$temp)))
        }
        { $mf1.$a } {
            $s.Push(
                [Convert]::ToDecimal(
                    [Math].GetMethod($mf1.$a,@([Double])).Invoke(
                    $null,
                    @([Decimal]::ToDouble($s.Pop())))))
        }
        { $mf2.$a } {
            $temp = [Decimal]::ToDouble($s.Pop())
            $s.Push(
                [Convert]::ToDecimal(
                    [Math].GetMethod($mf2.$a,@([Double],[Double])).Invoke(
                    $null,
                    @([Decimal]::ToDouble($s.Pop()),$temp))))
        }
        "e" { $s.Push([Convert]::ToDecimal([Math]::E)) }
        "pi" { $s.Push([Convert]::ToDecimal([Math]::PI)) }
        "max" { $s.Push([Decimal]::MaxValue) }
        "min" { $s.Push([Decimal]::MinValue) }
        "dup" { $s.Push($s.Peek()) }
        { [Decimal]::TryParse($a,[ref]$n) } { $s.Push($n) }
    }
}

$s

(Weird random fact: when I removed the "e" from the variable name $temp, Movable Type returned an error trying to post this. In fact just including the t m p word anywhere seems to mess it up.) It gets a bit deep in the indenting; I decided that the calls to GetMethod() would not be worthy of their own indent because they were resolved before the end--that is, the closing parenthesis for those calls happens before the chain of ))) at the end of the multi-line statement.

Because it mixes Math and Decimal it doesn't always work up to the size of Decimal, which is 2^96-1. So something like:

./rpn2 2 95 ^ dup 1 - +

actually throws an exception because the number winds up being an overflow due to rounding; you can compare:

./rpn2 2 95 ^

which uses the Math and returns

39614081257132200000000000000

to

./rpn2 max 2 /

which returns the slightly smaller

39614081257132168796771975168

Actually that last number is 2 ^ 95 exactly, when the true quotient is 2 ^ 95 - 0.5; a Decimal has no room for the extra digit at that magnitude, so the result gets rounded at the limit.
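To see the two routes side by side without running the whole calculator, here is a small sketch. The variable names are mine, and the exact digits may depend on your .NET version, so I'm not promising specific output beyond what is shown above.

```powershell
# 2^95 computed through [Math] goes via Double, which cannot carry all
# 29 digits, before being converted back to Decimal.
$viaDouble = [Convert]::ToDecimal([Math]::Pow(2, 95))

# Half of Decimal.MaxValue stays in Decimal arithmetic the whole way.
$viaDecimal = [Decimal]::MaxValue / 2

# How far the Double route overshoots the Decimal route.
$gap = $viaDouble - $viaDecimal

$viaDouble
$viaDecimal
$gap
```

Since the Double route lands above half of MaxValue, doubling it (which is nearly what "dup 1 - +" does) no longer fits in a Decimal, hence the exception.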

A tip of the hat to Bruce Payette for some help getting this working.

Posted by AdamBa at 11:07 PM | Comments (2) | TrackBack

October 15, 2007

RPN Calculator in PowerShell

I was auditing a course that we teach and one assignment was to work on an RPN calculator. The instructions say that you CANNOT use the .NET Stack class, which makes sense because then the problem becomes too easy. But then I got to thinking and realized that if I DID use the Stack class, it would be pretty simple to make an RPN calculator in PowerShell. So I did, while sitting in the back of class, in about 10 minutes:

$s = new-object System.Collections.Stack
$n = 0

foreach ($a in $args) {
    # Binary operators pop their right-hand operand first. The pattern is
    # anchored so that only a lone operator character matches (and not,
    # say, a negative number).
    switch -regex ($a) {
        '^[-+*/^]$' { $t = $s.Pop() }
    }
    switch ($a) {
        "+" { $s.Push($s.Pop() + $t) }
        "-" { $s.Push($s.Pop() - $t) }
        "*" { $s.Push($s.Pop() * $t) }
        "/" { $s.Push($s.Pop() / $t) }
        "^" { $s.Push([System.Math]::Pow($s.Pop(),$t)) }
        "e" { $s.Push([System.Math]::E) }
        "pi" { $s.Push([System.Math]::PI) }
        "sqrt" { $s.Push([System.Math]::Sqrt($s.Pop())) }
        { [System.Int32]::TryParse($a,[ref]$n) } { $s.Push($n) }
    }
}

$s

You can do cute things like (assuming it is called rpn.ps1):

./rpn 3 2 ^ 4 2 ^ + sqrt
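If you want to watch the stack as that expression is evaluated, something like the following sketch would do it. This is not the script above: Trace-Rpn is a made-up name, it parses numbers as Double instead of Int32, and it only knows the handful of tokens shown.

```powershell
# Hypothetical tracing variant: evaluates RPN tokens and prints the
# stack (top first) after each one.
function Trace-Rpn {
    $s = New-Object System.Collections.Stack
    foreach ($a in $args) {
        $n = 0.0
        $tok = [string]$a
        if ($tok -eq "sqrt") {
            $s.Push([Math]::Sqrt($s.Pop()))
        } elseif ([Double]::TryParse($tok, [ref]$n)) {
            $s.Push($n)
        } else {
            $t = $s.Pop()      # right-hand operand comes off first
            $l = $s.Pop()
            switch ($tok) {
                "+" { $s.Push($l + $t) }
                "-" { $s.Push($l - $t) }
                "*" { $s.Push($l * $t) }
                "/" { $s.Push($l / $t) }
                "^" { $s.Push([Math]::Pow($l, $t)) }
                default { throw "Unknown token: $tok" }
            }
        }
        # ToArray() lists the top of the stack first.
        Write-Host ("{0,-5} -> {1}" -f $tok, [string]::Join(" ", $s.ToArray()))
    }
    $s.Peek()
}

$result = Trace-Rpn 3 2 ^ 4 2 ^ + sqrt
```

The two squares get pushed as 9 and 16, the "+" collapses them to 25, and sqrt leaves 5 on top.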

Posted by AdamBa at 06:28 PM | Comments (1) | TrackBack

October 11, 2007

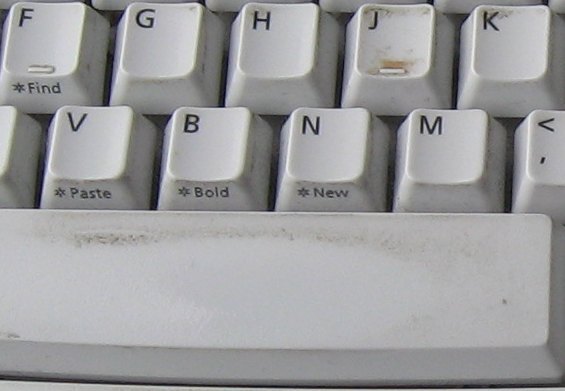

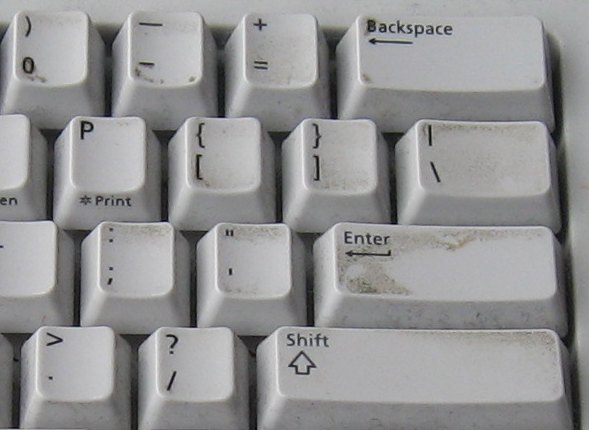

Washing My Keyboard

My keyboard at work developed some pretty nasty-looking growths when it was in storage for 4 months. I have a theory on this: our fingers deposit sketchy amoeba-like things on the keys while typing, but they also remove a certain amount of them at the same time. Keys that are pressed less frequently might get "cleaned" less often, but they also have fewer fungi to begin with. Thus there is a consistently small amount of goop on the keys, but it's constantly being refreshed so it never has time to turn into anything with medicinal properties.

However, if you take a previously active keyboard and stick it in storage for 4 months, then there is an opportunity for a thousand mold spores to bloom. Which is basically what it looked like after we moved into our new office and I unpacked my desktop computer (I had used only my laptop during the construction).

I had heard of people washing their keyboards in the dishwasher. It seemed worth trying and worst case was I destroyed the keyboard, but there are lots of keyboards lying around Microsoft. Based on advice I found on the Internet I washed it on a quick cycle (the "China/Gentle" one, to be precise) and turned off heated dry (I also washed the furnace screens at the same time). I used the standard Kirkland Signature detergent that they sell at Costco.

The results were impressive from a cleanliness perspective. These are some before/after pictures (no need to say which is which):

But would it work? The initial "wash your keyboard" meme from whenever-it-was talked about prying off the keycaps and letting it dry for a week, but that seemed excessive. I kept the keys on and let it dry for 3 days in our house, turning it every 12 hours or so. At that point it seemed pretty dry so I plugged it in, but got only the "no keyboard" beeping when I booted. So I left it sitting in my office (which is air-conditioned) for 3 more days, and when I plugged it in then it seemed to work fine. Evidently 3 days is too short but 6 days is enough, and if you try it too soon it won't (in my sample size of one) do any permanent damage to keyboard or computer. Let's see, "Quartz glyph job vex'd cwm finks"...yup, looks good.

Posted by AdamBa at 10:18 PM | Comments (1) | TrackBack

October 09, 2007

Oh Boo-Hoo-Hoo

The curse of A-Rod lives on!!

Posted by AdamBa at 10:48 PM | Comments (1) | TrackBack

October 08, 2007

Bellingham Bay Marathon

They say that those who forget history are doomed to repeat it. I was thinking something of this sort yesterday morning as I lined up at the start of the Bellingham Bay Marathon. Then my thoughts were distracted when a signboard blew over next to me...but we'll get to that later.

I wasn't particularly planning to run this race, but early this summer I was talking to a friend who was planning to run it...and three months later here I was. Long-time readers of this blog may recall my running the Seattle Marathon two years ago. During that race I was able to run only 20 miles ("only" applied here in the context of a marathon, not normal life) before I hit the wall and had to walk for a while, eventually finishing in 4 hours and 44 minutes. My training for Bellingham had gone a little better, including a final run in which I knocked off 21 miles in 3 1/2 hours, or precisely a 10 minute per mile pace. I also used to depend only on the water and energy drinks/food supplied on the course (not wishing to burden myself with any supplies while running), but now I have gone completely over to the side of carrying a belt with water bottles and electrolyte supplements--in my case I have an Amphipod running belt that can hold four 10-ounce bottles, plus has a little fanny pack on the front. I put a Gatorade/water mix in the bottles and eat Clif Shot Bloks along the way (I find them easier to eat than the "goo" food).

My one main goal for this race was to run the whole race without stopping. I figured if I did that I would definitely beat my old time, and had some hope of either doing 4:22 (ten minute miles) or at least 4:30. Unfortunately there was a slight twist thrown in, which was the weather. Gaze, if you dare, at this weather chart for Bellingham yesterday morning. Observe how the wind peaks between 8 am and noon (the race started at 8 am)--with such fascinating readings as 25.3 mph with 40.3 mph gusts at 8:53 am, and 29.9 mph with 43.7 mph gusts at 10:53 am. Then see how around noon, when the wind dies down somewhat, to under 20 mph, it starts to rain? So that was the weather we were faced with. And if you take a gander at this course map (PDF), you will see how parts of the course are quite near the water, and especially how the last 2 miles are run around a marina--no doubt very scenic on nice days, but feeling particularly exposed to the sideways rain when you arrive 4 hours into a race.

Bellingham is about 100 miles north of Seattle, so I drove up the day before and stayed in a hotel. I had a bad night of sleep--one of those nights where you could swear you weren't sleeping at all, except sometimes you remember a brief dream you had. I definitely recall seeing the alarm clock next to the bed at least at half hour intervals. However I was buoyed by an article I had read somewhere which said the sleep you get the night before a race doesn't really affect you, it's more the two or three nights before that. In fact I felt fine in the morning, although I had some bags under my eyes. I tried finding a weather report on TV, but then just opened my window and watched the trees whipping around outside for a moment to get all the weather information I needed. It actually wasn't all that cold (high 50s), just windy.

How did it go? Well, the bad news is that with the weather being what it was, and me also running a bit slow, I wound up finishing the race in 4:47. So I was a couple minutes slower than the first time (I wore a t-shirt under a long-sleeve shirt, which was probably overdressing and made me sweat more than I should have, but it did feel good during the windy parts. I also had an old-school Mambosok hat on my head, which got many comments but was probably too warm). BUT there were some definite good things:

- I DID run the whole freaking thing without stopping...yes 4 hours and 47 minutes keeping my legs moving, even running up (and later down) a ridiculously steep hill (California where it hits Chuckanut Drive, if you know Bellingham), and other ups and downs of a course that was much hillier than I expected.

- I never felt any really strong desire to stop and walk, and I never really doubted that I would finish the race; even in the last few miles when it was quite unpleasant, the feeling was more of being annoyed at having to endure the environment (possible subconscious motivating factor: if I had started walking, I would have been out there even longer).

- Although I finished slightly slower, my splits were much more even: from 2:03 and 2:41 last time to (roughly, I didn't note the exact midpoint) 2:18 and 2:29 this time.

- I felt much better afterwards; I was much less crippled immediately after the race, and today I feel only slightly sore. My only real "injury" was to the second toe (which evidently is called the pointer toe, by analogy with the pointer finger) on both feet, where my nails were a bit long and my shoe a bit short, with the result that the nail spent 26.2 miles getting jammed into the toe itself, leading to a somewhat gruesome sight which will hopefully cure itself without further medical intervention or loss of said nails.

- The course itself was very scenic, with much of it on unpaved trails through a forest, where the wind wasn't too bad and the running was pleasant (except when a branch blew out of a tree and landed on me). The whitecaps on the water were lovely to behold.

Posted by AdamBa at 09:14 PM | Comments (0) | TrackBack

October 05, 2007

The Microsoft Orchestra

I was reading blogs.msdn.com and somebody (don't have the reference, apologies if it is you) linked to an article about the Microsoft Orchestra. If you look at the photo of the orchestra you will see a "stout and friendly bear" type near the front, wearing a suit. That is Jim Truher, the conductor of the orchestra, who is a serious conductor (and singer). He also used to be a Program Manager on the PowerShell (nee Monad) team, so I worked with him for several years.

The orchestra has a website, msorchestra.org (not to be confused with Mississippi Symphony Orchestra, which has the corresponding .com address). The orchestra is doing three performances this year, with each one performed once in the Building 34 Cafe and once at the Sammamish Foursquare Church. The public performances at the church (the ones in Cafe 34 aren't really private, but you would need somebody with a cardkey to get in) are on December 8, April 5, and June 14, which are all Saturdays, with the Cafe 34 performance on the preceding Monday.

If you are thinking of working at Microsoft and wonder "Would there be some place to continue playing my long-since-dusty [insert orchestra instrument] in a friendly, supportive environment?", then the answer is definitely yes. Especially if you play a string instrument or percussion, which the Orchestra is evidently in need of. If you happen to do an interview loop on a Monday, you can stop by the Cafe 34 from 7-9 pm and listen to them rehearse.

Posted by AdamBa at 03:29 PM | Comments (1) | TrackBack

October 04, 2007

The Writing is on the Wall...

and the desk and the cubicles...

One of the cool things about our new space is that a lot of the surfaces are writable with markers. For example, this is the table in our team area where we have meetings involving everybody on the team (it's covered with meeting notes that are mostly too fuzzy to read, and a picture of a guy with a top hat and cane that I doodled during a slow moment):

And this is the wall of my cubicle (they had an open house to show off the new space and a couple of my children decided to draw the "Important Notice" and a picture of a pie):

You can also draw on the translucent walls between rooms (it's the letters "EE", a bit hard to see; the walls are opaque enough that what you see in them is not the room on the other side, but rather a reflection of our space; the vaguely human shape in the corner is my reflection):

The part to the right of the corner is a door, which is clear glass and also writable. And there are sections of wall covered with some special surface (not sure if it is paint or wallpaper) that you can write on. It's also a good surface to project images on, so the newer conference rooms don't have pull-down screens for the projectors but instead have a section of magic wall. You can get so used to this "write anywhere" idea that some walls have signs on them that say something like "This wall is not for writing on".

Posted by AdamBa at 09:04 PM | Comments (1) | TrackBack

October 03, 2007

Fear of a Broken Build

One of the things that drives me slightly bananas at Microsoft is our obsession with not "breaking the build".

Backstory: Ever since a time before I started at Microsoft, projects at Microsoft have used a central server to hold the master copy of the source code (this is variously known as a source code server or source repository). Individual developers will also have a copy of the code on their own machines, so that they can build private copies as they work on changes, but at some point they "check in" their changes to the repository, and those changes then become part of the master copy. Developers will also, as they see fit, perform a "sync" in which they update their local copy of the source with the current version from the repository. And almost every team will create an official build every day, where an official build server syncs a copy of the current master source and builds it. People can run tests on "private" builds (that they built on their own machines) but it is these official builds that wind up being tested heavily, and eventually one of them (the last one) will be the one that we ship.

While it is perfectly normal to have a build fail on your own machine, as you fix typos and whatnot in your code, it is considered a cardinal sin to check in code that doesn't build properly. It will break the official daily build, and it will also break any other developers who happen to sync at a time when your bad changes are still polluting the repository. So it is bad bad bad to check in broken code, and teams have come up with various "incentives" to prevent this. For example, on some teams if you break the build you have to be the person who starts and babysits the official daily build, continuing to do so every day until some other sap breaks it (occasionally this would be made more punitive by requiring that the daily build be started at, say, 6 am; the severity of this punishment was unpredictable, since you never knew when somebody else was going to break the build and replace you. At this point such builds are almost always completely automated and don't require human coddling). In other cases people had to wear funny hats if they broke the build (I'm 100% serious).

This is sort-of OK, since you don't want people to break the build. But somehow we developed this obsession with not breaking the build. The word "breaking" makes it sound like you are breaking a crystal vase or something, as if a build once broken might never be repairable. That's not true at all: these are just electronic bits that are breaking, and if somebody checks something in that doesn't build, the worst case is you revert their change (and possibly other related changes) and then poke them with a stick a few times and tell them to be more careful next time. More commonly, you just fix the break; a typical break involves one person checking in a change to the parameters of an API around the same time somebody else checks in a new call to that API. Such breaks are usually obvious to fix and any developer or tester who notices the problem can fix them.
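To make that typical break concrete, here is a minimal sketch in Python. The names (open_log, start_build) are made up for illustration, and Python only reports the mismatch when the call runs, whereas a compiled language would report it when the build runs. The shape of the problem is the same: each checkin is fine on its own, and the combination is not.

```python
# Developer A's checkin: the (hypothetical) API gains a required parameter.
def open_log(path, mode):
    return f"{path} opened for {mode}"


# Developer B's checkin, written against the old one-parameter version.
def start_build():
    return open_log("build.log")


# Each change worked on its author's machine; together they do not.
error = None
try:
    start_build()
except TypeError as exc:
    error = exc

# The fix is usually obvious to whoever notices: pass the new argument.
def start_build_fixed():
    return open_log("build.log", "append")

fixed_result = start_build_fixed()
```

Nobody was careless here in any meaningful sense; neither developer could have seen the other's change before checking in.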

Yes, some breaks are due to carelessness, like just not compiling something, or failing to add a new source file to the repository at all (this is a classic: since the file is present on your machine, your local build will still work, but anybody else's will fail). And people did clever things like work for weeks, make a massive checkin (that didn't build), then immediately go on vacation, leaving others to divine their intent and pick up the pieces. But a lot of build breaks are somewhat predestined to happen and can be cleaned up pretty easily.
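The forgotten-file break can be sketched in a few lines of Python. This is a toy model, not any real source control system: the repository and the developer's machine are just sets of file names, and the file names themselves are invented for the example.

```python
def missing_files(tree, manifest):
    """Return the files the build needs but cannot find in this tree."""
    return sorted(f for f in manifest if f not in tree)


# What the build expects after the developer's change introduces parser.c.
manifest = ["main.c", "util.c", "parser.c"]

# The developer's machine has the new file; the master copy does not yet.
local_tree = {"main.c", "util.c", "parser.c"}
repository = {"main.c", "util.c"}

# The checkin includes the edited files, but parser.c was never added.
checked_in = {"main.c", "util.c"}
repository |= checked_in

local_missing = missing_files(local_tree, manifest)    # developer's build
synced_missing = missing_files(repository, manifest)   # everyone else's build
```

The developer's build finds everything it needs, while anybody who syncs comes up one file short.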

Yet we developed a culture at Microsoft to treat build breaks as so terrible that we had to go to great lengths to prevent them--lengths that when added up for every checkin on a project wound up far outweighing the damage from the occasional build break. Furthermore, the set of what constituted a "build break" grew as the definition of what an official build machine did grew larger. Many teams now build for x86 and x64, run static analysis, have a set of Build Verification Tests, etc. It's not that these are bad, but they are also not necessarily as deadly (in the short term) as a classic build break where the thing just won't compile and every developer who syncs is dead in the water until fixed. Yet what happened was all this stuff got added to the definition of "build break", and when you combine that with a prohibition on build breaks in the master copy, what you wind up with is every individual developer going through a lengthy process before each checkin, where everybody has to build for x86 and x64 (and a complete build of the whole system, not just what they have changed), run tests on their own machine, etc. Some teams even instituted a non-deterministic process in which you have to do a sync, then a clean build, then run tests...and then if somebody else checks in during that time, you have to start over again. The cure for build breaks winds up being much worse than the illness. And I mean just in terms of time spent, ignoring silly ideas like making the build breaker come in at 6 am every day until somebody else messed up.
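To see why the restart-if-anybody-checks-in rule is so painful, here is a rough sketch of it in Python. The numbers are invented, and I am assuming the developer only notices an intervening checkin at the end of a validation pass and then starts over from a fresh sync.

```python
def validate_until_quiet(validation_minutes, other_checkins):
    """Repeat sync + clean build + tests until no one else checks in meanwhile.

    other_checkins is a list of times (in minutes) at which other developers
    check in. Returns (attempts, minute at which the checkin finally happens).
    """
    start = 0
    attempts = 0
    while True:
        attempts += 1
        end = start + validation_minutes
        # Any checkin that landed during this pass invalidates it.
        if not any(start < t <= end for t in other_checkins):
            return attempts, end
        start = end


# One-hour validation, with teammates checking in at minutes 30, 100 and 170.
busy_day = validate_until_quiet(60, [30, 100, 170])

# The same validation on a day when nobody else checks in.
quiet_day = validate_until_quiet(60, [])
```

With these made-up numbers the busy day takes four passes and four hours to land one change, versus a single hour on the quiet day, and how long it takes depends entirely on what everybody else happens to be doing.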

It turns out there is a movement afoot in the industry that addresses some of this, under the somewhat misleading name Continuous Integration. CI is actually more about having a central repository and checking in often, which people at Microsoft already do, but along with it comes the notion of having a central server which does builds and tests and all that very frequently (on every checkin, if you want) and immediately notifies the team if there is a break. This is the part of CI that I'd like to see more of at Microsoft: people are free to check in without a massive amount of work, secure in the knowledge that they will find out quickly if they broke something. It's not that they just check in whatever they want without verifying that it builds; it's more an attitude about empowering developers to decide on their own how much building and testing they need to do individually before they check in. A break is still treated as bad; in fact the entire team is supposed to stop whatever they are doing until it is fixed. But it is recognized as something that is not worth knocking yourself out to avoid, because in the end it's not too painful when it happens.
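The build-on-every-checkin idea boils down to a small loop. What follows is a minimal sketch in Python, not how any particular CI product works; the checkin records, the fake_build_and_test function and the notification text are all invented for illustration.

```python
def integrate(checkins, build_and_test, notify):
    """Build every checkin in order and report breaks as soon as they appear.

    Returns the id of the most recent checkin that built and passed tests.
    """
    last_good = None
    for change in checkins:
        ok, detail = build_and_test(change)
        if ok:
            last_good = change["id"]
        else:
            notify(f"change {change['id']} by {change['author']} broke the build: {detail}")
    return last_good


def fake_build_and_test(change):
    # Stand-in for a real sync, build and test run.
    if not change["compiles"]:
        return False, "compile error"
    return True, "ok"


messages = []  # stand-in for mailing the team
checkins = [
    {"id": 101, "author": "alice", "compiles": True},
    {"id": 102, "author": "bob", "compiles": False},
    {"id": 103, "author": "bob", "compiles": True},  # the quick fix
]
last_good = integrate(checkins, fake_build_and_test, messages.append)
```

The break in change 102 produces exactly one notification, and the follow-up fix in 103 puts the master copy back in a good state without anybody having to wear a funny hat.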

This is something of a return to the old days; if you read my story about breaking the build in front of Dave Cutler you will see that back then we had complete freedom to decide how much testing we did on our checkins. Obviously in that case I went too far (nobody would seriously suggest checking something in without at least compiling the changed files), and in some sense the overburdened checkin processes of today are paying for the sins of the past, committed by goofballs like myself. But technology is also helping here; back then there was a single master repository, so my build break (for the 30 seconds it existed) would have hit everybody on the team who did a sync then. Nowadays we have levels of repository, so when you check in you are likely only checking in to a repository that affects a handful of developers. So in that situation, certainly, I think the Continuous Integration mindset is the way to go.

Posted by AdamBa at 04:31 PM | Comments (7) | TrackBack